Here's the thing about certainty: it compounds. Every day that nothing goes wrong, our confidence in tomorrow grows slightly stronger. We call this "learning from experience." Taleb calls it a trap.

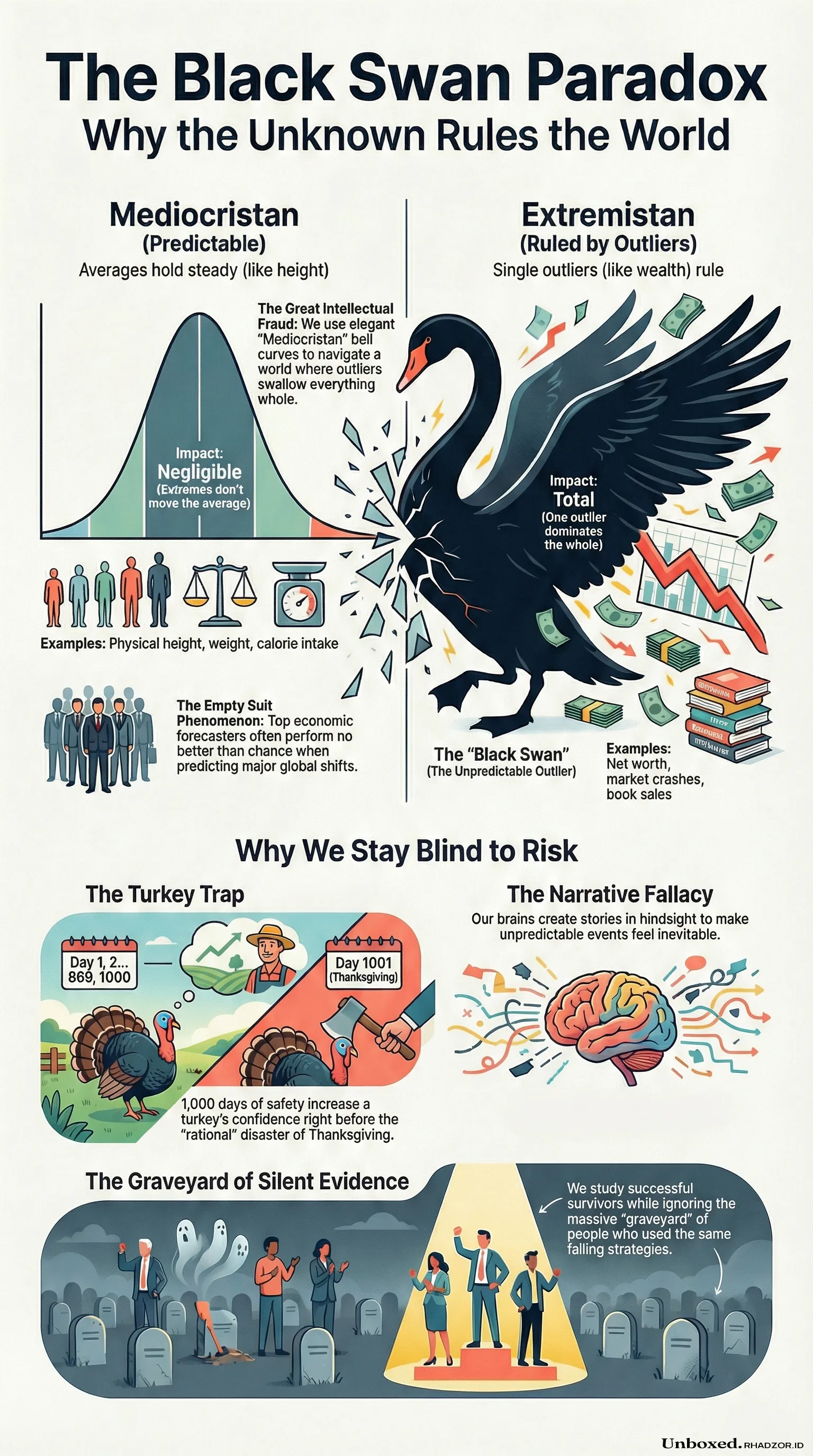

His image of the turkey — fed daily for a thousand days, growing more statistically certain of human kindness with each sunrise — is the kind of analogy that lodges itself somewhere uncomfortable in your brain. Not because it's clever. Because you recognize yourself in it. You recognize institutions in it. You recognize entire economies in it. The turkey on day 1,000 isn't naive. It's *rational*, by every conventional measure. That's what makes Thanksgiving so brutal.
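To put a number on the turkey's growing confidence, here is a minimal sketch using Laplace's rule of succession. That estimator is my choice for illustration, not something Taleb prescribes, and `rule_of_succession` is a hypothetical helper name.

```python
from fractions import Fraction

def rule_of_succession(fed_days, total_days):
    """Laplace's estimate of P(fed tomorrow) after fed_days feedings in total_days."""
    return Fraction(fed_days + 1, total_days + 2)

# Assumption: the turkey has been fed on every single day so far.
days = (1, 10, 100, 1000)
confidence = [rule_of_succession(n, n) for n in days]

for n, p in zip(days, confidence):
    print(f"day {n:>4}: P(fed tomorrow) = {p} (about {float(p):.4f})")
```

On day 1,000 the estimate is 1001/1002, the highest it has ever been. The model has no term for Thanksgiving, so each extra day of data only makes it more sure.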

---

Taleb splits the world into two domains, and this distinction alone is worth the price of the book. *Mediocristan* is the realm where extremes don't matter much — add the tallest person alive to a room of a thousand, and the average height barely moves. *Extremistan* is where one outlier swallows everything else whole. Drag a single billionaire into that same room and the average net worth becomes a fiction that describes no one.
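The arithmetic behind that room is easy to check. A rough sketch with made-up round numbers: everyone is 170 cm tall and worth $100,000, the tall newcomer is 251 cm, and the billionaire holds exactly $1 billion. All of these figures, and the `mean_after_adding` helper, are assumptions for illustration.

```python
def mean_after_adding(values, newcomer):
    """Return (mean before, mean after) when one newcomer joins the group."""
    before = sum(values) / len(values)
    after = (sum(values) + newcomer) / (len(values) + 1)
    return before, after

# Illustrative, made-up figures for a room of a thousand people.
heights_cm = [170.0] * 1000
net_worth_usd = [100_000.0] * 1000

h_before, h_after = mean_after_adding(heights_cm, 251.0)
w_before, w_after = mean_after_adding(net_worth_usd, 1_000_000_000.0)

# Relative change in the average caused by a single extra person.
height_shift = (h_after - h_before) / h_before
wealth_shift = (w_after - w_before) / w_before

print(f"average height moves by {height_shift:.3%}")
print(f"average net worth moves by {wealth_shift:.0%}")
```

With these inputs the average height shifts by a small fraction of a percent, while the average net worth jumps to roughly eleven times what anyone in the original room actually holds.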

The problem isn't that Extremistan exists. The problem is that we keep using Mediocristan tools to navigate it. Elegant bell curves. Confident economic forecasts. Risk models that look precise because they're mathematical. They work beautifully — right up until they don't. And when they fail in Extremistan, they don't fail small. They fail in ways that erase retirement savings, destabilize governments, and get quietly explained away by the same experts who built the models.

Taleb calls the bell curve *that great intellectual fraud*. The failure isn't a calculation error. It's an epistemological one. We weren't doing the wrong math; we were asking the wrong questions entirely.

---

Pause here for a second.

Taleb argues that the most respected forecasters in economics and social science, the ones with the credentials, the conference keynotes, the expensive suits, do little better than chance when predicting major events. He calls them *empty suits*, and what's maddening isn't the incompetence. It's that the apparatus of authority remains fully intact regardless of track record. An analyst who missed 2008 could be back on television explaining 2009. The costume of expertise outlasts its actual content.

But blaming the experts is too easy. The deeper issue is biological. Human brains are narrative machines. We don't perceive raw facts — we perceive stories. After every Black Swan, the explanations arrive quickly and confidently, each one making the event sound almost inevitable in hindsight. The signs were there, people say. You just had to look. What they don't mention is that nobody looked, because our pattern-recognition systems are calibrated for familiar threats, not novel ones. We're very good at fighting the last war.

---

What unsettled me more than anything else is something Taleb calls *silent evidence*. We study successful entrepreneurs and extract their principles — their discipline, their risk tolerance, their vision. What we never study is the graveyard of people who had the exact same traits, the exact same work ethic, the exact same strategy, and simply didn't get the lucky break that made the difference. They're not in the case studies. They're not on the podcast. They're invisible by design, because failure doesn't get a book deal.

So we build philosophies of success on a sample that's been filtered by survival, then wonder why the advice doesn't always work.
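A toy simulation makes the filter visible. This is a sketch under loud assumptions: every founder has the same discipline, success is decided by luck alone, and the 1% luck rate is invented. The `simulate` function and its parameters are hypothetical.

```python
import random

def simulate(n_founders=10_000, p_lucky=0.01, seed=0):
    """Every founder shares the same trait; only an independent lucky break differs."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    founders = [
        {"disciplined": True, "lucky": rng.random() < p_lucky}
        for _ in range(n_founders)
    ]
    survivors = [f for f in founders if f["lucky"]]
    return founders, survivors

founders, survivors = simulate()

# What the case studies see: how common the trait is among the winners.
trait_among_survivors = sum(f["disciplined"] for f in survivors) / len(survivors)

# What the graveyard hides: how often the trait actually led to success.
success_given_trait = len(survivors) / sum(f["disciplined"] for f in founders)

print(f"share of survivors with the trait: {trait_among_survivors:.0%}")
print(f"share of people with the trait who survived: {success_given_trait:.1%}")
```

Study only the survivors and discipline looks like the secret, since every one of them has it. Count the graveyard too and the same trait turns out to have been shared by thousands who never made it.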

*"A life saved is a statistic; a person hurt is an anecdote. Statistics are invisible; anecdotes are salient."* This line hit differently than I expected. It's not just about risk perception. It's about how we construct reality from what we can see, and quietly ignore everything we can't.

---

There's one more paradox worth sitting with. The person who quietly prevents a disaster receives no recognition, because nothing happened. Taleb's example is a legislator who mandates locked cockpit doors just before September 11: the attack never occurs, so nobody knows what the law averted. The same goes for whoever redesigns the system before the collapse. The hero is invisible precisely because they succeeded. Meanwhile, we celebrate the people who manage the wreckage of preventable catastrophes, because wreckage is visible and rescue is cinematic.

This isn't just an observation about public perception. It's a structural incentive problem. It means the rational career move, in many systems, is to let things break and then fix them loudly — not to prevent the break quietly. Black Swans don't just expose fragility. They expose who benefits from it.

---

Closing the book doesn't really close it. That's the honest summary.

Taleb doesn't offer a clean exit. There's no framework that makes you immune, no checklist that keeps the unknown at bay. What he leaves you with is closer to a calibrated unease — an awareness that the map is probably wrong, that the model is probably missing something catastrophic, and that the most dangerous moment is the one where everything feels most stable.

The turkey doesn't survive by predicting Thanksgiving. But maybe it helps to stop mistaking a full food bowl for proof that the world is on your side.