Here's something that stuck with me long after I closed this book: a group of ordinary people (retired programmers, social workers, stay-at-home parents), sitting at home with nothing but an internet connection, reportedly outperformed professional intelligence analysts who had access to classified government information. Analysts backed by a $50 billion budget, global spy networks, and the highest security clearances. Beaten by someone doing research between lunch and a Netflix queue.

That's not a metaphor. That actually happened.

And it's not really about access to information. It's about how you think.

---

Tetlock spent decades tracking expert predictions — economists, political scientists, foreign policy analysts — and the results are, frankly, humbling. Their average accuracy? Roughly equivalent to a dart-throwing chimpanzee. Not because these people are stupid. Many of them are genuinely brilliant. But brilliance and accurate forecasting, it turns out, are not the same thing. Sometimes they're inversely related.

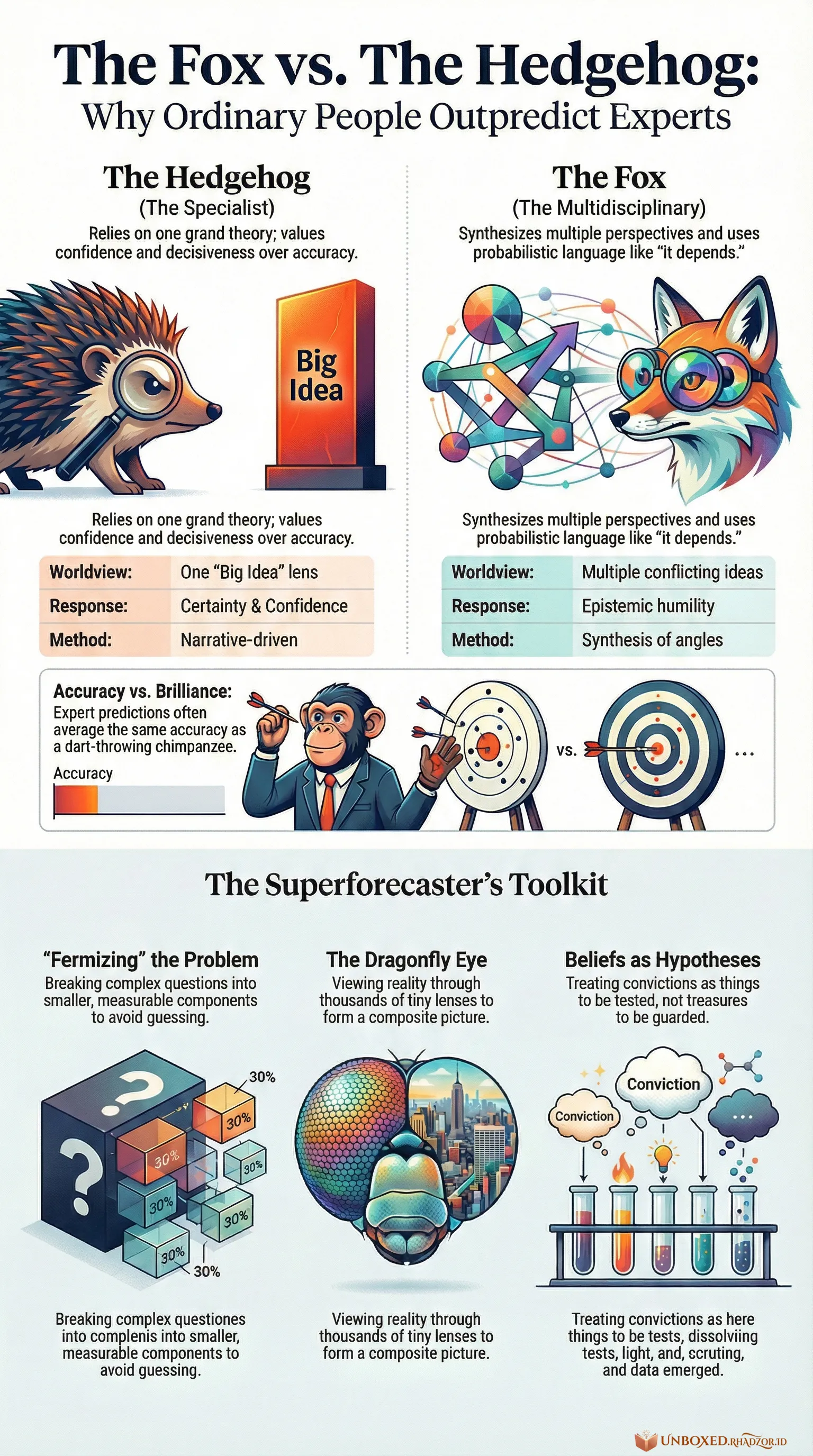

He calls it the Hedgehog vs. Fox divide. Hedgehogs see the world through one big lens — one grand theory, one ideological framework that explains everything. They're decisive, quotable, and perfect for a 90-second news segment. Foxes don't have a single narrative. They pull from everywhere, hold multiple conflicting ideas at once, and aren't embarrassed to say "it depends" or "I'd put that around 60%."

Media loves Hedgehogs. We all love Hedgehogs. Certainty is addictive, and Hedgehogs are dealers.

But when you actually measure their predictions over time? The boring Fox wins. Not on every single question, but reliably, on average.
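If "measure their predictions over time" sounds abstract, here is roughly what it can look like. This is a minimal sketch, assuming a Brier score in its simple 0-to-1 form (the mean squared gap between the probability you stated and what actually happened, where lower is better). The two forecasters and all of their numbers are invented for illustration; they are not data from the book.

```python
def brier_score(forecasts: list[float], outcomes: list[int]) -> float:
    """Mean squared error between stated probabilities and 0/1 outcomes.

    0.0 is a perfect record; always saying 50% earns 0.25.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("need exactly one outcome per forecast")
    return sum((f - o) ** 2 for f, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical track records on the same five yes/no questions.
outcomes = [1, 0, 0, 1, 0]                     # 1 = it happened, 0 = it didn't
hedgehog = [0.95, 0.95, 0.05, 0.95, 0.95]      # always near-certain
fox = [0.70, 0.40, 0.30, 0.60, 0.35]           # hedged, "it depends"

hedgehog_score = brier_score(hedgehog, outcomes)
fox_score = brier_score(fox, outcomes)

print(f"Hedgehog: {hedgehog_score:.3f}")
print(f"Fox:      {fox_score:.3f}")
```

In this made-up record the Hedgehog is right three times out of five, but the two confident misses are punished so heavily that the Fox ends up with the better (lower) score. That is the whole trick: a scoring rule like this charges you for certainty you didn't earn.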

---

There's a quiet irony running through the whole book that I couldn't shake. The traits that make someone compelling — confidence, a clear worldview, an unwillingness to backtrack — are almost exactly the traits that make someone a bad forecaster. And the traits that make someone a good forecaster — epistemic humility, constant revision, comfort with ambiguity — make them genuinely terrible television.

So the feedback loop is broken by design. The people most visible to us tend to be among the least accurate. And because we almost never track their accuracy, they rarely face consequences for being wrong. They just pivot to the next confident prediction.

Think about the last time you saw a pundit publicly reckon with a prediction they got badly wrong. Not deflect. Not reframe. Actually reckon with it.

Take a second with that.

---

The book introduces what it calls *superforecasters*: people who, through discipline and method, are genuinely better at predicting future events than chance, and better than most experts. One of their core habits is something Tetlock calls "Fermi-izing" a problem, after the physicist Enrico Fermi: breaking a complex question into smaller, measurable components. Not answering "will this country's economy collapse?" but instead: what's the base rate of economies collapsing under these conditions? What's different this time? What am I not accounting for?

It's not glamorous. It looks like someone doing homework, not someone having a revelation.
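To show how unglamorous, here is the homework written out. This is my own sketch of the three questions above, not a method from the book: start from a base rate, then nudge it up or down for each thing that makes this case different. Adjusting in log-odds is my assumption (it keeps the result between 0 and 1), and the function name, the 10% base rate, and the size of each nudge are all placeholders I made up.

```python
import math


def logit(p: float) -> float:
    """Convert a probability (strictly between 0 and 1) to log-odds."""
    return math.log(p / (1 - p))


def inv_logit(x: float) -> float:
    """Convert log-odds back to a probability."""
    return 1 / (1 + math.exp(-x))


def fermi_estimate(base_rate: float, adjustments: dict[str, float]) -> float:
    """Start from the base rate, then apply each case-specific nudge in log-odds."""
    log_odds = logit(base_rate)
    for _reason, shift in adjustments.items():
        log_odds += shift  # positive = more likely, negative = less likely
    return inv_logit(log_odds)


# Hypothetical numbers, purely for illustration.
estimate = fermi_estimate(
    base_rate=0.10,  # "how often does this happen under similar conditions?"
    adjustments={
        "what's different this time": 0.5,
        "what I'm probably not accounting for": -0.2,
    },
)
print(f"Estimate: {estimate:.0%}")
```

The point isn't the arithmetic. It's that every number in there is written down, which means every number can be argued with and revised later.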

There's also this image I keep coming back to — the dragonfly eye. A dragonfly doesn't have one sharp, focused lens like humans do. It has thousands of tiny lenses forming a composite picture of reality. Superforecasters work the same way: not one grand theory, but a synthesis of dozens of angles, constantly updated. It's less satisfying than a single sharp insight. But it's far more accurate.

---

What unsettled me most wasn't the part about experts or media — that's an easy target. What unsettled me was the personal implication. We all carry narratives about how the world works, about who we are, about what's going to happen. And we tend to protect those narratives rather than test them.

*"Beliefs are hypotheses to be tested, not treasures to be guarded."*

That line lands differently once you start counting how many of your own beliefs have never really been tested — just inherited, reinforced, and defended.

Being a Fox is uncomfortable in a way that's hard to overstate. It means living in a permanent state of "I might be wrong about this" — not as a philosophical pose, but as an actual operating condition. You don't get the psychological comfort of a clean worldview. You don't get to be the confident voice in the room. You just get to be slightly more accurate, quietly, over time.

Most people will choose the clean worldview. I understand why.

---

I don't know if reading this made me a better forecaster. Probably not immediately. But it did change something about how I listen to confident people — including myself. There's a reflex now, somewhere between skepticism and curiosity, that kicks in whenever someone speaks about the future without any acknowledgment of uncertainty.

*"Nothing is one hundred percent,"* Leon Panetta once said. And he ran the CIA.

The least I can do is try to remember that.

Though honestly — I'd give it about 65% odds that I forget by next week.